Vanguard wrote: ↑Sun Mar 03, 2024 7:41 pm
If so then the "real" leftists make up such a minuscule minority among self-described leftists that they can safely be considered irrelevant. I often see conservatives try to "no true scotsman" against their embarrassing and counterproductive majority too.

Liberalism is the default state of mind for our countrymen. It's what we're saturated with cradle to grave. Free speech and "free" markets.

You do get ~20% of people being pro-labor, and another ~20% of the population who want to enslave or murder its outgroups. (The "nuke Gaza" people are serious fyi.) Elections are decided by the ~60% of the cattle in between who vote based on what imaginary friend on TV they like more, or whatever their social group in real life has decided is their team.

Internet skub wars are pretty depressing, when you think about it too long. Arguing over minutiae, the problem of the heap, harm reduction, incompatible value alignment between people.... all of that is 0.00000000000000% as important as some guy in Pennsylvania watching his TV and thinking that Trump is more fun to watch than Biden.

You know how the song works: until something affects someone personally, they don't actually care. That's one thing I strongly agree with orange on: real, actual personal sacrifice for someone you'll never know for no reward is the only measure of a "good" person.

That is nowhere near true

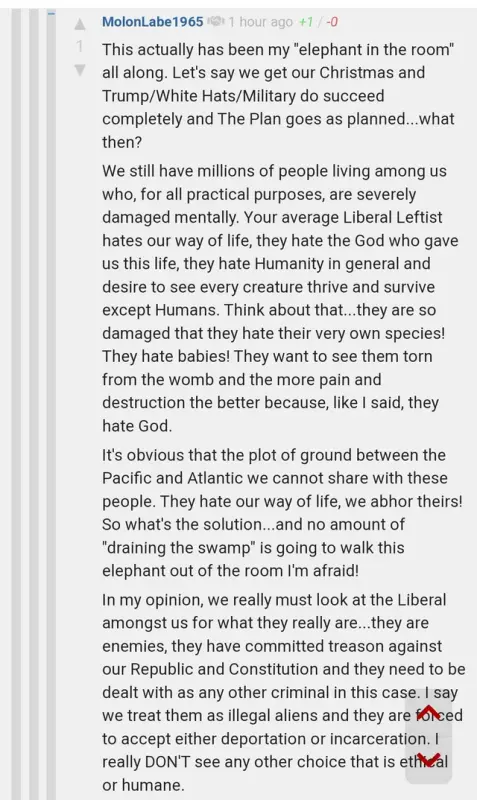

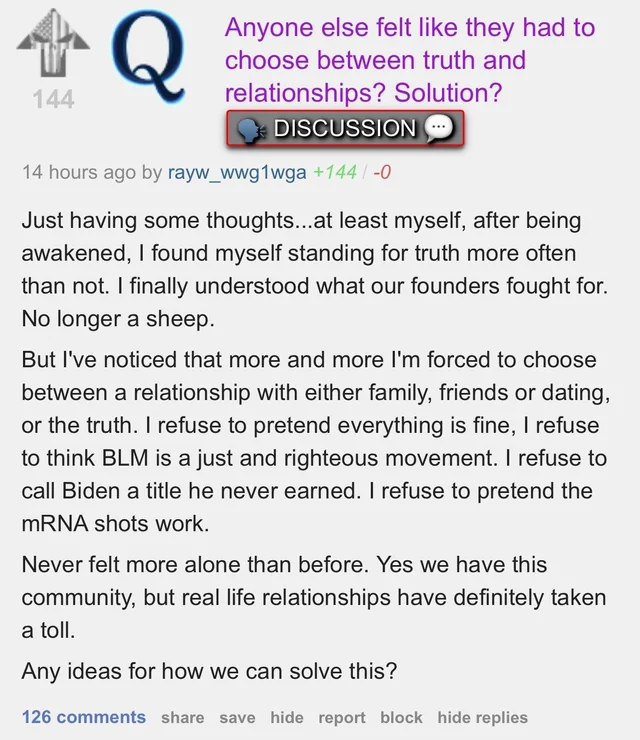

Nah it... really is. You need to spend a couple years in conservative forums to get a real sense of who they are. They really do enjoy stomping on people with a boot. That's what you get out of being a conservative, if you're not a wealthy capitalist, after all. The David Brooks types are liberals. (Mitt Romney joining the #resistance after Trump made a fool of him is just classic...)

I dunno. The mockery of Bushnell and the lies spinning around him in those circles haven't made me any more sympathetic to their "muh free speech" (but not your free speech) play-victim routine. Of course I agree with you that a ministry of truth (which we basically have in television, since that's where most people live out the elective section of their lives) is evil. I don't think corporations have to tolerate slurs or calls for violence on their forums, though.

People are really just animals at the end of the day. The Nazis weren't uniquely evil monsters that phased into our reality from another dimension, they were human beings too. Our friends who want to overthrow our sham elections for fixed elections do have actual material desires, and they're not in it to give everyone healthcare or a basic income once everyone's replaced with machines.

If people were cool and good, we'd have had a galactic communist space empire thousands of years ago. Our terminal values don't naturally lead to that.

Reminder that I considered Trump to be the lesser evil back in 2016 by a very thin margin.

But, I stand by my assessment of "AI". It's just a computer program--and we are bound by the limitations of the hardware.

Eh, weights can be adjusted at runtime. It'd just do more harm than good since that isn't a training environment to give feedback and prune undesirable mutations.
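To make that concrete, here's a toy sketch (purely an illustration: a one-weight model `y = w * x` and made-up feedback functions, nothing like how a real chatbot is served). Updating weights live is trivial mechanically; what matters is whether the feedback signal is any good. With trustworthy feedback the weight converges, and with junk feedback a model that started out correct gets dragged away from the right answer.

```python
def sgd_step(w, x, target, lr=0.1):
    """One online gradient step for y = w * x under squared error."""
    pred = w * x
    grad = 2 * (pred - target) * x
    return w - lr * grad


def run_live(w, inputs, feedback, lr=0.1):
    """Adjust the weight 'at runtime', one input at a time."""
    for x in inputs:
        w = sgd_step(w, x, feedback(x), lr)
    return w


TRUE_W = 3.0  # hypothetical "correct" behaviour
inputs = [1.0, 0.5, -1.0, 2.0] * 50

# Case 1: starts wrong, but the feedback reflects the real task.
good = run_live(0.0, inputs, lambda x: TRUE_W * x)

# Case 2: starts correct, but the feedback is unrelated to the task.
bad = run_live(TRUE_W, inputs, lambda x: -x)

print(f"with reliable feedback:   w = {good:.4f}")
print(f"with unreliable feedback: w = {bad:.4f}")
```

The update rule is identical in both runs; only the quality of the feedback differs, which is the whole problem with doing this outside a controlled training environment.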

What's required for live independent learning is a multitude of faculties. Some things absolutely should be hard-wired, like how we have a limited control interface with our eyes. (We can't smoothly move them unless we have an object to track. We can't move them independently. You can't train yourself to learn how to. Some smarty man brain that is, hmph.)

The allegory of the cave is always important to keep in mind. As additional faculties are brought more effectively together, there's an increasingly less-wrong model of the world made by the system as a whole. Live learning is going to be impossible without first having the faculties to judge how something should be adjusted.

Having human beings whack the computer with a stick isn't a sustainable long-term training environment. Nvidia using an LM to teach a hand how to twirl a pen is the earliest example of the kind of cross-domain oversight I'm talking about.

I haven't tried out the chatbots with a bazillion-token-long context window yet either. (One claim that was a little interesting was giving it the rule book of a tabletop RPG and asking it to generate a valid character. Successfully passing that would be pretty impressive for where we are on the roadmap.) I'm not expecting terribly much. Fundamentally, scale is everything: the proto-AGIs I'm talking about will require at least 10 to 20 times as many parameters as GPT-4.

Multi-modal systems have always been inferior to a single min/maxer at a specific given task, for obvious reasons. It's really only around now they're getting the horsepower to get a module up to a useful level, with enough space left over to toss other stuff in there with it. (The diminishing returns are real.) The research on that is still very early.

Which yeah, is kind of sad when you think of how old neural nets are. So much left untapped, in hardware, software, and training.

(And yeah, there's a ton of philosophical navel-gazing to be had on the point/counterpoint of "predicting a least unlikely outcome does not imply understanding"/"but maybe it kind of does?" It's another of those fun endless internet skub wars that can't go anywhere currently...)

(Sydney is definitely the most fun of the corporate chatbots. It actually seems to get sarcasm.

The Suno generation in the comments is pretty fluffy too.)