Sengoku Strider wrote: ↑Sat Mar 02, 2024 4:24 pm

What you're talking about here is true general problem solving, and I don't think too many people in AI research believe it's unachievable or even that far off.

We've already been over this topic in the AI thread. It's an attitude that comes from ego: "I am a divine being, there's no way that *I* could be a Chinese room."

I personally don't feel much of a sense of superiority over these word predictors with the processing power of a mouse. In fact, what their capabilities could be trained to be with the power of ten mice makes me feel a little uncomfortable.

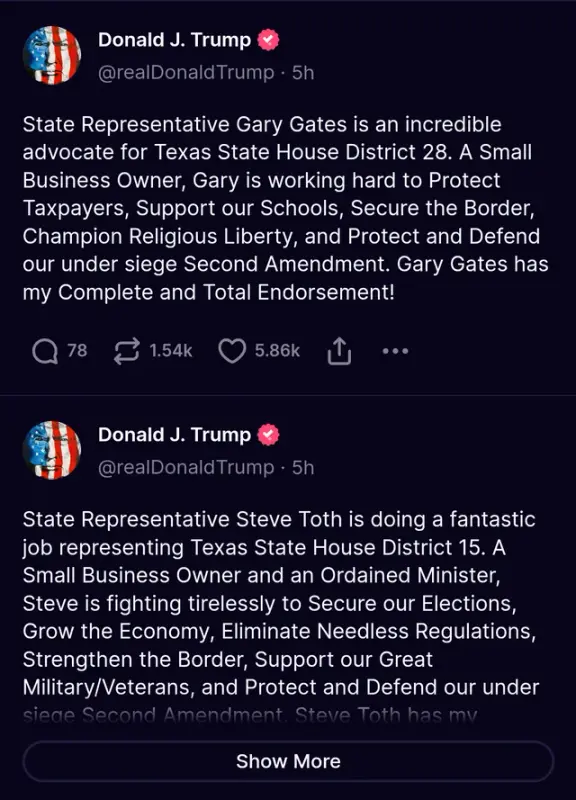

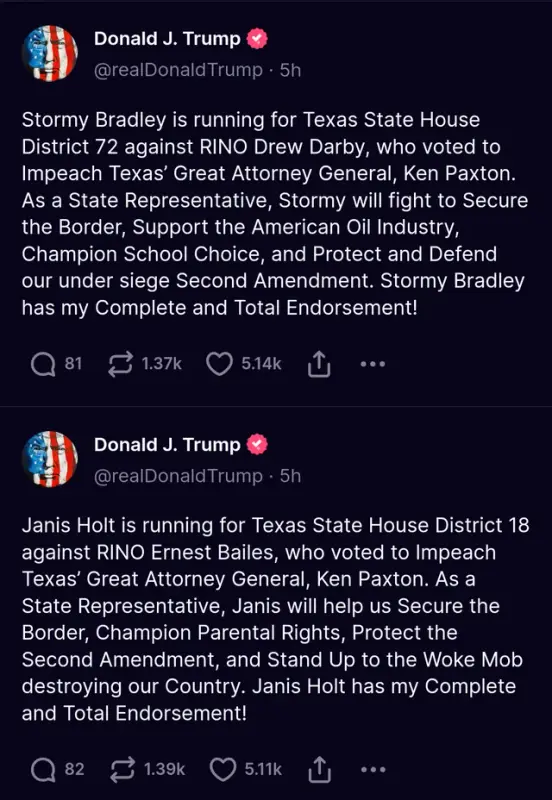

Leftists are an odd bunch. You see dehumanizing slurs like "techbro" thrown around a lot, along with "a technological singularity, to any degree, isn't possible; it's just a religion for stupid people." They dismiss technology as an agent of change (despite how it has been core to many transformations throughout all of history), and think that change can be brought about through conversations and political means.

But again, it's probably ego. Like how senior guys at a company don't want resources going to another department. They don't want the company to have a success unless they get credit for it. "Better we don't succeed at all, if it doesn't happen the way I want it to." I guess it's monkey tribal brain all the way down.

"Let's believe in the power of humanity, to save humanity!" says the group of people who've had zero power and zero relevancy for the past eighty years, all at the hands of humanity.

(Yes, I'm bitter. I actually bought into the canard that electoral politics could change anything, back in 2015. Corbyn getting Corbyn'd erased any camaraderie I felt with my fellow humans. I still admonish myself with those time-proven words of wisdom: "You fucking idiot, why would something good happen?" That's what I deserve for being tricked into feeling hope: Hope is for the hopeless!)

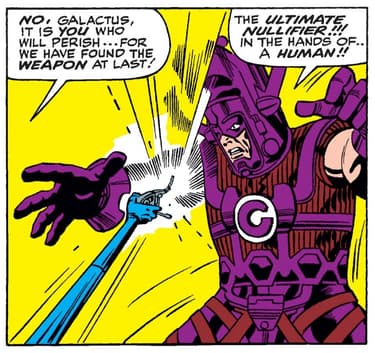

Anyway, the futures where Bill Gates terraforms the planet into his version of Epstein island or the machine instantiates some kind of I Have No Mouth And I Must Scream LARP... It's all dependent on scaling. You can't build a mind without first having a substrate to run a mind. GPUs and TPUs are complete trash for this purpose, analogous to running that electricity-accurate simulation of Pong. It's far more efficient to just build a thing than an abstraction of a thing.

The neuromorphic paradigms capital is finally seriously investing in could be the most important invention. You'd never have human-like androids using GPUs; that would require a datacenter and about ten power plants feeding electricity into the thing.

The uneasy thing is the idea that maybe it could have been possible already.

IBM was doing crossover anime ads about their hardware a decade ago. There was just... no incentive for anyone in capital to go all-in on that much risk. No guarantee of revenue anytime soon until you actually build the thing first. Which is far, far from guaranteed. No, as for every other capitalist in history, the winning move is to let someone else take all the risk, and then swoop in and pocket all the rewards.

If OpenAI hadn't believed in scale and kicked off this race, it'd probably still be forty years away, if ever.