Well, this is a bit of an opportunity to do an end-of-year review, then.

I think some more fundamental research was done recently to improve OLED longevity. As I recall, it was along the lines of preventing attack on some part of the structure, such as the p-type (hole-conducting) semiconductor. But that raises another uncertainty: how soon does this filter into shipping products?

Samsung promised something like 100,000 hours on their latest models, or 10-20 years, which is enough for them to show a kid growing up and being handed down the gift of ancient technology. So, let's talk about the elephant in the room, which is how displays are actually used and bought:

In this kind of enthusiast space we like to talk about reliability and high quality, but for many people that will be irrelevant, because that's not the choice they get when replacing a TV. Old technology won't be useful with coming developments, and it's not just companies that choose cheap technologies; most buyers do too. I don't think most people are indifferent to reliability and quality when given the choice, but we all know that the push for higher resolution and HDR material means today's stuff won't be future-proof, and future stuff may well be intentionally obsoleted too, as long as companies can keep the refresh cycle going.

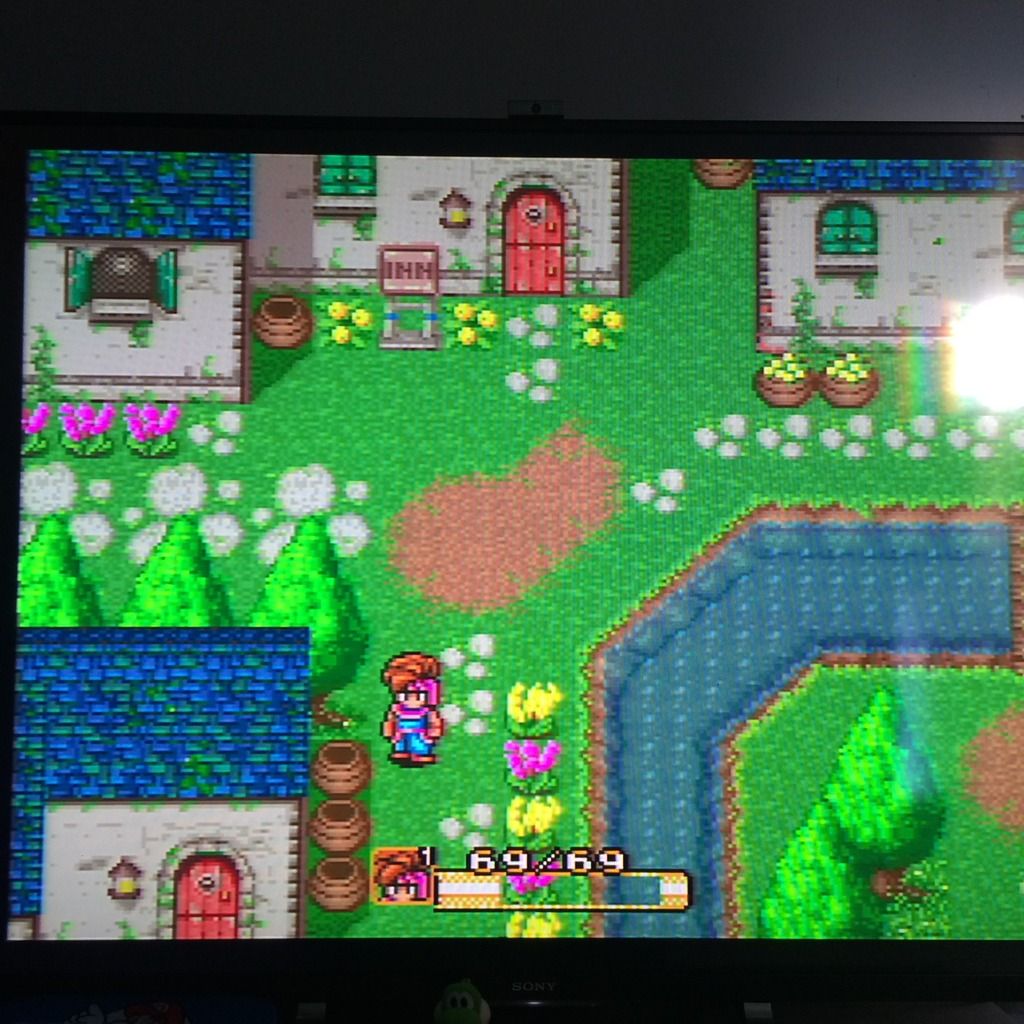

Most buyers simply buy something and keep using it until it breaks, at which point they probably have to buy into an entirely new ecosystem. For most people this is fine, because who plays SNES anymore?

Alternatively, if you have a bit more cash to throw around, you can get into the upgrade cycle before the old set breaks. For companies this isn't even a growth opportunity; they simply want to keep pace with the economy despite a market saturated with TVs after the sizable outlay by all parties during the analog-to-digital transition. If companies don't find a reason to keep people turning over recent sets, they stand to have severely constrained cash flow. If anything, the remaining growth opportunities lie in finding new markets with even lower barriers to entry: digital signage glass, maybe? Or panels for "smart" alarm clocks?

A lot of technology watchers are eagerly anticipating 2016 for a number of developments in driving new panel types with content: Intel vs. AMD in CPUs, and AMD vs. nVidia in GPUs. I would add that Intel iGPUs vs. nVidia dGPUs may prove to be more important. Alternate Frame Rendering (as used by SLI and CrossFire) also has an important year coming. Unfortunately, people looking to future-proof their purchases can't rely on standards like DisplayPort 1.3 to signpost the way (adaptive sync is still optional in DP 1.3), and while we would like to see the rise of 4K and HDR in PC displays, it will likely be a long wait for anything consumer-affordable.

So, back to reality and boring price structures. Right now the market seems to look something like this:

1080p LCDs: still going to make up a large number of shipments. The resolution makes money, so companies continue to order sizable shipments of panels, with most changes probably just coming from choosing different panel sizes.

4K LCDs: encroaching into the space. After Marqs' response to my question about building a scaler, I had to think, "it's almost as if the big manufacturers have to keep pushing for something more difficult to make in order to keep the small guys out." Of course, the competition isn't (yet) Marqs and enthusiasts, but rather the huge number of OEMs and "display manufacturers" in the space. Old-timers like Sony and Panasonic are joined not only by one-time Korean parts makers LG and Samsung, but also by entirely new brands: Vizio in the US, and even companies like Haier from China. Haier? I still can't believe they make anything other than cheap portable refrigerators, but here we are. With everybody ordering panels from the same sources, there are probably very few meaningful opportunities for product differentiation, but there is a lot of room to fall behind. Then again, maybe not, since many of the "choices" display manufacturers face are probably identical to those of their competitors, who may well use identical panels in some of their products.

Those two panel types represent a good investment for companies: you need 1080p to capture most of the buying public, and you need 4K to stay competitive. 4K isn't a product differentiator, of course, and I don't expect HDR to be one either.

Now onto plasma and OLED:

Plasma simply seems not to have been a good investment for its makers. They are keen to say that popular demand was dropping off, but I also think regulatory pressures and the sheer expense of building them made them unpopular. Their heavy weight didn't help either.

I've read a variation on the old saying "the perfect is the enemy of the good" in relation to OLEDs. OLEDs' main problem right now is that incremental advances in LCD technology, coupled with an apparently quite large difference in production cost, mean that OLEDs are less cost-effective to make for large screens (anything larger than a smartphone, and maybe even there too). That, to me, seems like enough of a problem to drive the whole story.

If you are a PC gaming enthusiast in particular, there's little in this story to be hopeful about. The story of early G-Sync and FreeSync displays was "$700+ for a TN panel?" OLED is even further away; we can't expect weird resolutions to get pushed out just for gaming monitors, at any price. Now, I'm hopeful that OLED panels could be pushed easily enough into high-framerate roles at common sizes and resolutions (probably 4K or higher), and I'd be happy to see that. However, I don't see anybody investing in desktop-sized OLED panels when even capturing the relatively huge TV market has been such a battle for OLED. Until and unless prices come down, OLED is not a winner for PC gamers.

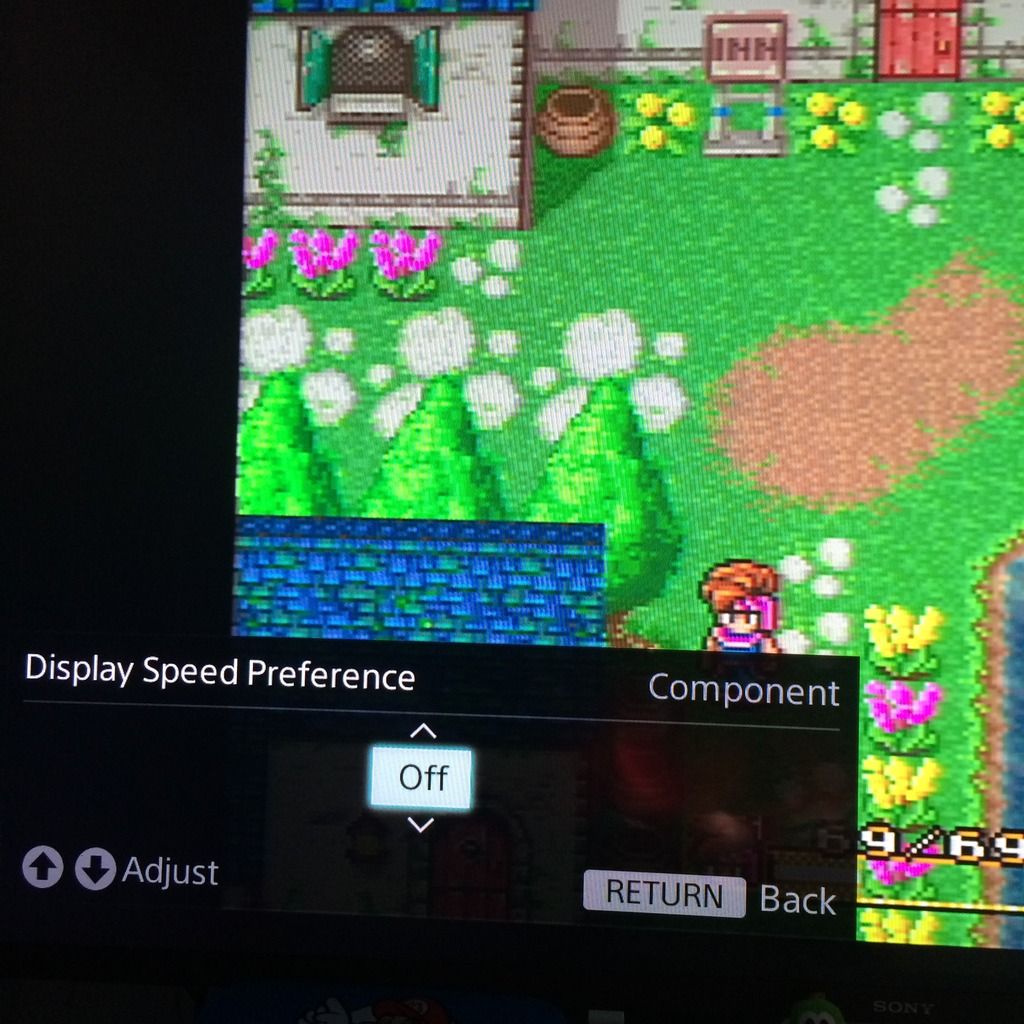

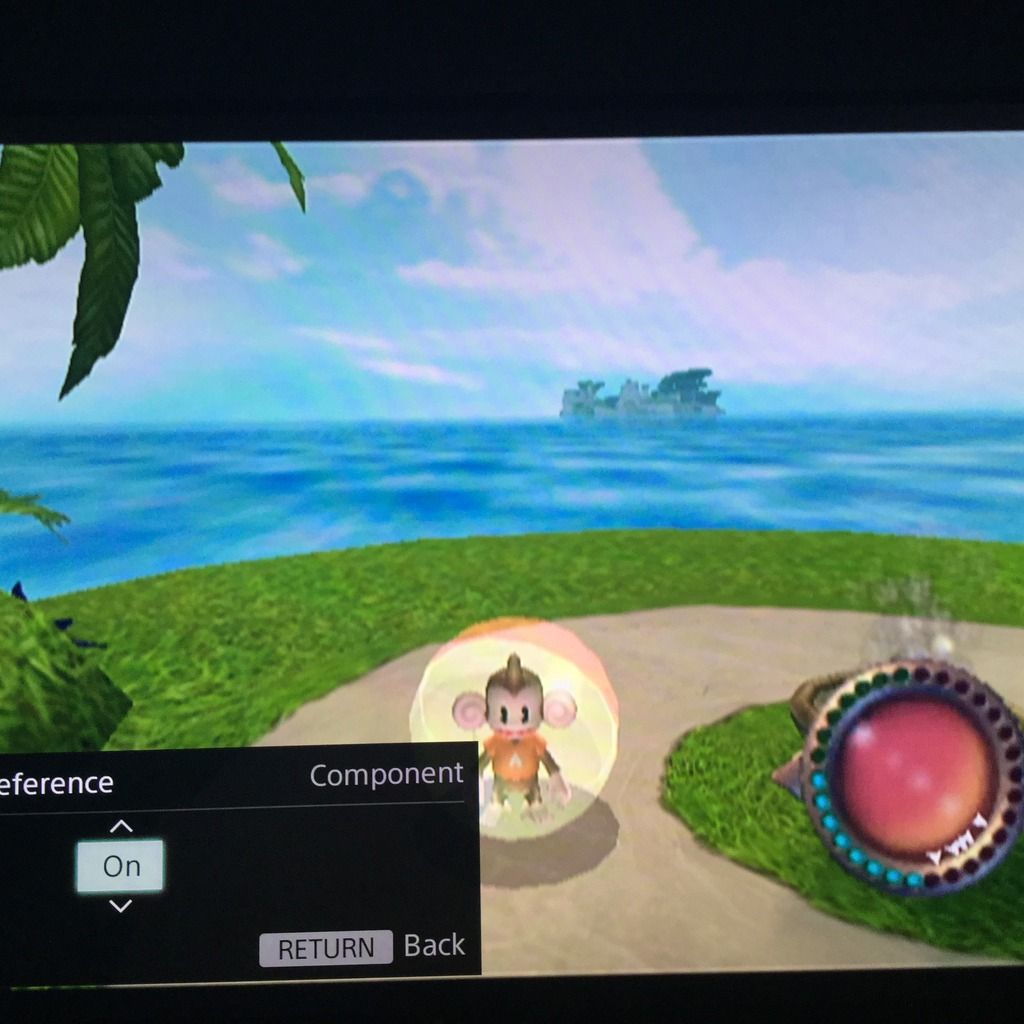

144Hz+ 1440p panels probably represent the best choice for many PC users, and not just gamers, but they bring an ugly non-integer (4:3) scaling ratio for 1080p content (though perhaps a better fit for scaling older systems in emulation, since 240-line output scales by exactly 6). This is a 'luxury' specialty panel type, and we can't expect significant price drops unless everybody suddenly decides they need one for productivity, which I don't see happening as long as the bean counters control corporate spending. If there is a silver lining, I expect that 4K panels will crowd out this technology, and by then hopefully we will have better size choices, GPUs to power them, and maybe even good content scaling options.
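The scaling point comes down to simple arithmetic: a source scales cleanly when the panel's line count is a whole multiple of the source's. Below is a minimal sketch, assuming only vertical line counts matter and ignoring aspect ratio, overscan and whatever filtering a real scaler applies. The function names and the list of source heights are hypothetical, chosen for illustration (240 lines is assumed as typical older-console output).

```python
from fractions import Fraction

# Hypothetical source heights in active lines, chosen for illustration;
# 240 lines is assumed as a typical output of older consoles.
SOURCE_HEIGHTS = {"240p": 240, "480p": 480, "720p": 720, "1080p": 1080}
PANEL_HEIGHTS = (1080, 1440, 2160)  # 1080p, 1440p and 4K panels


def vertical_scale(panel_lines: int, source_lines: int) -> Fraction:
    """Exact ratio of panel lines to source lines (vertical only)."""
    return Fraction(panel_lines, source_lines)


def is_integer_scale(panel_lines: int, source_lines: int) -> bool:
    """True when each source line maps to a whole number of panel lines."""
    return panel_lines % source_lines == 0


if __name__ == "__main__":
    # Print the scale factor for every panel/source pairing.
    for panel in PANEL_HEIGHTS:
        for name, src in SOURCE_HEIGHTS.items():
            ratio = vertical_scale(panel, src)
            kind = "integer" if is_integer_scale(panel, src) else "non-integer"
            print(f"{panel}-line panel, {name} source: x{float(ratio):.3f} ({kind})")
```

On these assumptions, 1080p content lands on a 1440-line panel at a 4:3 ratio, so lines can't be duplicated evenly, while 240-line content scales by exactly 6. On a 2160-line (4K) panel, 1080p content scales by exactly 2, which is part of why 4K could eventually offer cleaner scaling options.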